What should managers do with their Analytics function?

Whilst at Measure Camp a couple of weeks ago, I attended a session entitled ‘Does anyone outside this room care about Web Analytics?’ In it, I argued that much of people’s dissatisfaction with their organisations came from misreading the situation, and that the maturity model put forward by Stephane Hamel explained it: they were really seeing the impact of deficiencies in other areas, and managers were bearing the brunt of it. I was, however, somewhat rebuffed on this. Discussing it in the office a couple of days later, I realised why: managers would not be doing their job properly if theirs were the most advanced of all the disciplines, because part of managing analytics is bringing the other areas up to the same level.

So here is the big guide to how you should manage analytics if it is your responsibility. Previously, when talking about the maturity model, I wrote a post on the sorts of things I thought you should know if you were a Web Analyst.

This time I’m not going to assume you know anything about analytics, but I am going to assume you can work out where your organisation stands by applying the model. Before we do that, here are the basics of the maturity model:

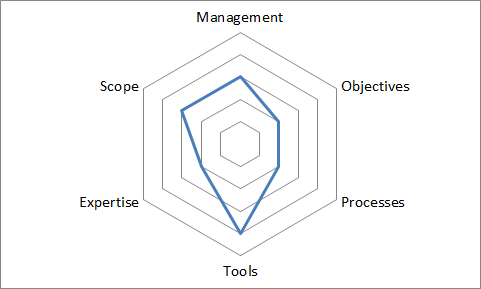

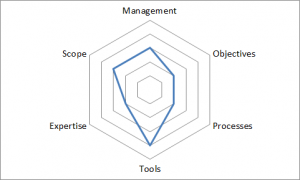

The model takes six facets of analytics that together allow an organisation to optimise in the most efficient manner. You work out how mature you are in each area on a scale of 0 – 5, and plot the results on a little graph like the one above.

If you are equally mature in all areas, then you are optimising efficiently, but you could get better value for your money by becoming more mature overall. If one arm of the graph is more mature than the others, you will be wasting money because you can’t use that area to its full potential.
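
To make the balance idea concrete, here is a minimal sketch, assuming you have already scored yourself 0 – 5 on each of the six facets listed below. It is not part of Hamel’s model itself: the facet keys are my own shorthand, and the tolerance of one point for calling a profile ‘balanced’ is an assumption you would tune for your organisation.

```python
# A minimal sketch, not part of Hamel's model itself: it summarises a set of
# 0-5 facet scores and flags an unbalanced profile. The facet keys are shorthand
# for the six facets in this post; the tolerance of 1 point is an assumption.

FACETS = ("objectives", "process", "data_and_tools", "people", "scope", "management")


def assess(scores: dict[str, int], tolerance: int = 1) -> dict:
    """Return the spread between the most and least mature facets, whether the
    profile is balanced (spread <= tolerance), and which facets lag or lead."""
    missing = set(FACETS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for facet in FACETS:
        if not 0 <= scores[facet] <= 5:
            raise ValueError(f"{facet} score {scores[facet]} is outside 0-5")

    low = min(scores[f] for f in FACETS)
    high = max(scores[f] for f in FACETS)
    return {
        "spread": high - low,
        "balanced": high - low <= tolerance,
        # Facets holding the rest back: the first candidates for investment.
        "lagging": [f for f in FACETS if scores[f] == low],
        # Facets ahead of the rest, where money may not yet be paying off.
        "leading": [f for f in FACETS if scores[f] == high],
    }


if __name__ == "__main__":
    # Hypothetical scores: an all-singing, all-dancing tool but nobody to look at the data.
    example = {
        "objectives": 2, "process": 2, "data_and_tools": 4,
        "people": 1, "scope": 2, "management": 2,
    }
    print(assess(example))
```

With those hypothetical scores the profile is unbalanced (a spread of 3), with `people` as the lagging facet and `data_and_tools` as the leading one: exactly the ‘great tool, nobody using it’ situation.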

The classic examples are buying an all-singing, all-dancing tool without having anyone in place to look at the data, or not having the processes in place to act on what your tool and experts recommend because you have to wait 12 months for the next development cycle.

So what are the six facets?

- Are you optimising with respect to your business objectives? This looks like a yes/no question rather than a 0 – 5 scale, but really it isn’t. You can go from no objectives at all, to simple channel objectives, to overall organisational objectives.

- Do you have the processes in place to turn the recommendations that the analytics suggests into reality? This is a scale from a large waterfall process, through Agile, to multivariate testing on the fly (and it doesn’t just refer to the technical side, but also to your marketing and organisational processes).

- Do you have tools in place to give you the right levels of data? We quite often call this ‘Data and Tools’ because you can have a great tool that gives you crap data. The scale here goes from nothing, through basic tools and basic reports, to fully integrated report sets for all levels of the organisation.

- Do you have people on the ground to do the analytics? Avinash Kaushik always recommends the 10/90 rule (for every £10 you spend on technology, you should spend £90 on people doing stuff with the data). The scale runs from nobody, through part-time work and multidisciplinary teams, to experienced and empowered individuals.

- What do you do analytics on? This can range from something as simple as a single tactic (eg email), through your whole web presence, all the way up to multichannel.

- Finally, where does the responsibility for this sit in the management team? Does it sit with a project manager, or does it go all the way up to the top of the organisation?
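
The 10/90 rule from the people facet is simple arithmetic, but it is a useful sanity check on a budget. Here is a minimal sketch, assuming you want either to split a total budget at 10/90 or to see what people spend your current tool spend implies. The function names are hypothetical and the figures in the usage lines are purely illustrative.

```python
# A minimal sketch of the 10/90 rule: for every £10 spent on technology,
# spend about £90 on people. Function names are hypothetical.


def split_budget(total: float) -> tuple[float, float]:
    """Split a total analytics budget into (tools, people) at 10/90."""
    tools = total / 10        # 10% on technology
    people = total - tools    # the remaining 90% on people doing stuff with the data
    return tools, people


def implied_people_spend(tool_spend: float) -> float:
    """Given what you already spend on tools, return the people spend the rule implies."""
    return tool_spend * 9     # £90 of people for every £10 of tools


if __name__ == "__main__":
    # Illustrative figures only.
    print(split_budget(200_000))          # (20000.0, 180000.0)
    print(implied_people_spend(20_000))   # 180000
```

Read the second function the other way round too: if a £20,000 tool licence is matched by far less than £180,000 of analyst time, the tool is probably the over-mature arm of your graph.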

So how do you work out where you are? There are a few options.

- It would be remiss of me, as the head of a function at a consultancy, not to say ‘Hire a consultancy’. But I think this is a route only for people who are very serious about implementing the recommendations a consultancy makes. These changes are likely to be organisational, and they are likely to impact your job. The price won’t be cheap. What you will get is a company that will ‘interview’ various members of your team and those around you to find out what you do on a day-to-day basis. They will look at the implementation of your tools. They’ll look at your reports. They’ll look at the decisions that you make from those reports. Then they’ll workshop what you should do to bring yourself up to a level playing field. It will have credibility because all teams will have been involved in setting up a series of actions and a timeline for when you want them to be in place.

- You can do the above internally. You’ll get a more biased view, and the results may lose credibility because they’ll seem to be sponsored by one part of the organisation.

- You can do a simplified version of the above. I quite often do this with teams that I work with – we’ll sit in a room with a couple of people who work with a client, I’ll go through the levels and we’ll work out where we think the organisation is. Then we’ll come up with a couple of simple ideas that will push the organisation up in an area we think it’s deficient in. It stops us offering the same service over and over where it might not be applicable.

- You can listen to snake oil salesmen whose job it is to sell you their product (I jest, but quite often you’ll find companies trying to push a product, particularly tools, when a missing product isn’t the reason you aren’t taking advantage of what you already have).

Thanks Alec – you are absolutely right on all points in this post. I’m really happy (and honoured!) to see my work being used and applied.

Your points about doing it internally or leveraging an external expert are absolutely right. Some time ago, someone who had tried to do it on his own confided in me that it didn’t work out very well for him… the ‘you can’t preach in your own backyard’ syndrome…

The concept is heavily rooted in change management. Politics and resistance can get in the way if you try to do it on your own, while an “outsider” can convey the same message (and more) in a different way, without putting the internal analyst on the spot and at risk.