Log file analysis is more important than ever

I was going to write this post a couple of weeks ago, when I stumbled across Paul Holstein’s post on 404 error pages and how to find them. Then I noticed from the comments on that post that June Dershewitz had also written a post on how to find broken links. And then, to cap it all off, I have been doing some log file analysis for a slightly different reason, so I thought it was about time I wrote about it and described what you can and can’t do with log files.

What are log files?

When your browser requests a page from a website, the server it requests the page from can keep a log of that request. In fact, it logs every file your browser requests in order to display the page: the page itself, the images, the CSS files, the JavaScript files, and so on.

What do log files look like?

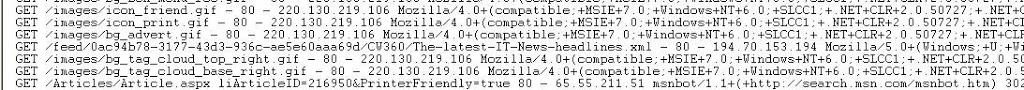

Well effectively they are just long text files with a load of information in them. You have a line for each request from the server. They look a bit like the one below (although you obviously can’t see all of it).

What information is contained in a log file?

Log files can contain lots of information, including anything sent in your browser’s request headers or known to the server. Here are the important ones:

- The file requested

- The user’s IP address

- The user’s user agent (browser and operating system combination)

- The referrer for that file (the page that the file was loaded from)

- The status of the request (for example, 200 if it was successful, 404 if the file wasn’t found, or 301 if it was permanently redirected to another URL)

- Any cookies that the server has given the user
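
Many servers write these fields in the Apache/NGINX “combined” log format. As a minimal sketch, assuming that format (your server may log different fields or a different order, and cookies are not part of the combined format unless the server is configured to log them), one line can be split into its fields with a regular expression. The `parse_line` helper and the sample line are made up for illustration.

```python
import re

# Assumption: Apache/NGINX "combined" log format. Adjust if your server differs.
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

def parse_line(line):
    """Return the fields of one log line as a dict, or None if it doesn't match."""
    m = COMBINED.match(line)
    if m is None:
        return None
    entry = m.groupdict()
    entry["status"] = int(entry["status"])  # e.g. 200, 301, 404
    return entry

# A made-up request for a missing image, linked from the about page.
sample = ('203.0.113.7 - - [10/Oct/2008:13:55:36 +0100] '
          '"GET /images/logo.png HTTP/1.1" 404 512 '
          '"http://www.example.com/about.html" '
          '"Mozilla/5.0 (Windows; U; Windows NT 5.1)"')
print(parse_line(sample))
```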

How do log files differ from javascript page tags?

HBX, Google Analytics, SiteCatalyst, etc. all rely on JavaScript page tags. When the user loads the page, their browser also loads and runs the JavaScript in the tag. That JavaScript then requests a file (usually a tiny image) from the vendor’s server, passing information about the user and the page as parameters on that request. This means that if your browser doesn’t run JavaScript, you won’t be included in the data that the third-party vendor gives you.
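
To make that concrete, here is a hypothetical sketch of the kind of request a page tag makes, written in Python rather than the browser-side JavaScript a real tag uses. The collector URL `stats.example.com/pixel.gif` and the parameter names `page`, `ref` and `res` are invented for illustration; every vendor uses its own.

```python
from urllib.parse import urlencode, urlparse, parse_qs

# Hypothetical collector endpoint and parameter names; real vendors differ.
COLLECTOR = "https://stats.example.com/pixel.gif"

def beacon_url(page, referrer, screen):
    """Build the URL a page tag might request to record one page view."""
    params = {"page": page, "ref": referrer, "res": screen}
    return COLLECTOR + "?" + urlencode(params)

# In the browser, the tag's JavaScript would request this URL as an image.
url = beacon_url("/about.html", "http://www.google.com/search?q=logs", "1024x768")
print(url)

# On the vendor's side, the same values are read back out of the query string.
received = parse_qs(urlparse(url).query)
print(received["page"])
```

If the browser never runs the script, this request is never made, which is exactly why visitors without JavaScript are missing from tag-based reports.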

Log file analysis, on the other hand, suffers from caching issues. Your browser (or your ISP’s proxy server) may store a copy of a page you have viewed, so that the next time you view it the page is served from that cache rather than requested from the website again. This means that you may miss lots of page views and visits if you only look at what is requested from your server.

What are the advantages of log files?

Log files offer one major advantage over normal page tagging solutions: they record visitors that don’t run JavaScript. This means that you get lots of lovely information about the robots and spiders that crawl your pages on a regular basis.

What can you use log files for?

404 Error pages:

As Paul and June mention in their blog posts, one of the most common uses for log files is to search out 404 errors. You search your log files for every request that failed because the file wasn’t found (status code 404). This shows you which page the user was requesting, which is usually enough to work out why they didn’t get it. It also shows you the referrer, i.e. the page the user was on when they clicked the link, so you can check whether that link is broken.
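
A minimal sketch of that search, assuming combined-format log lines: it counts 404 responses per requested path and referring page, so the most common broken links come out first. The `broken_links` helper and the sample lines are hypothetical.

```python
import re
from collections import Counter

# Assumption: combined log format; only the path, status and referrer are captured.
LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)"'
)

def broken_links(lines):
    """Count 404 responses per (requested path, referring page) pair."""
    counts = Counter()
    for line in lines:
        m = LINE.match(line)
        if m and m.group("status") == "404":
            counts[(m.group("path"), m.group("referer"))] += 1
    return counts

# Made-up sample: two visitors follow the same dead link from the home page.
LOG = [
    '203.0.113.7 - - [10/Oct/2008:13:55:36 +0100] "GET /old-page.html HTTP/1.1" 404 210 "http://www.example.com/index.html" "Mozilla/5.0"',
    '198.51.100.2 - - [10/Oct/2008:13:56:01 +0100] "GET /old-page.html HTTP/1.1" 404 210 "http://www.example.com/index.html" "Mozilla/5.0"',
    '198.51.100.2 - - [10/Oct/2008:13:56:05 +0100] "GET /index.html HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]

for (path, referer), n in broken_links(LOG).most_common():
    print(f"{n} x {path} linked from {referer}")
```

A referrer of `-` means the browser didn’t send one (for example, a typed-in URL or a bookmark), so those 404s can’t be traced back to a link.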

Files not being found:

Log files aren’t limited to pages: they contain a line for every file that was requested, so they are a useful way of finding out whether you are linking to broken images, CSS files or RSS feeds (again, by looking for 404 errors). These could be making the user experience slower, or even broken.
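
Since every file gets a status code, the same search can be split by file type. This sketch (again assuming combined-format lines; `missing_by_type` and the sample lines are hypothetical) groups the paths that returned 404 by their extension, so missing images, stylesheets and feeds stand out separately.

```python
import posixpath
import re
from collections import defaultdict

# Assumption: combined log format; capture the requested path and the status.
REQUEST = re.compile(r'[^"]*"\S+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) ')

def missing_by_type(lines):
    """Group 404'd paths by file extension (e.g. .png, .css, .xml)."""
    groups = defaultdict(set)
    for line in lines:
        m = REQUEST.match(line)
        if m and m.group("status") == "404":
            path = m.group("path").split("?", 1)[0]  # drop any query string
            ext = posixpath.splitext(path)[1].lower() or "(none)"
            groups[ext].add(path)
    return dict(groups)

LOG = [
    '203.0.113.7 - - [10/Oct/2008:13:55:35 +0100] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '203.0.113.7 - - [10/Oct/2008:13:55:36 +0100] "GET /images/logo.png HTTP/1.1" 404 209 "http://www.example.com/" "Mozilla/5.0"',
    '203.0.113.7 - - [10/Oct/2008:13:55:36 +0100] "GET /css/site.css HTTP/1.1" 404 209 "http://www.example.com/" "Mozilla/5.0"',
    '203.0.113.7 - - [10/Oct/2008:13:55:37 +0100] "GET /feed/rss.xml HTTP/1.1" 404 209 "-" "FeedReader/1.0"',
]
print(missing_by_type(LOG))
```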

Search engine Spiders:

Search engine spiders regularly crawl your site looking for new content and for links to other content. If your pages aren’t being indexed by Google or Yahoo! and you want to find out why, you can look in your log files to see whether those pages have been crawled by the search engine’s spider. If they have, is there another technical reason (e.g. a noindex tag or nofollow links)? If they haven’t been crawled, why not (robots.txt, lack of links, lack of a sitemap, etc.)?
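
A rough sketch of that check, assuming combined-format lines and a list of pages you expect to be indexed (for example, the URLs in your sitemap). It simply looks for the string `Googlebot` in the user agent; because anyone can fake a user agent, Google suggests confirming genuine Googlebot traffic with a reverse DNS lookup, which this sketch does not do. The helper names and sample lines are hypothetical.

```python
import re

# Assumption: combined log format; capture path, status and user agent.
LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def crawled_by(lines, bot="Googlebot"):
    """Return {path: last status seen} for every URL the named spider requested."""
    seen = {}
    for line in lines:
        m = LINE.match(line)
        if m and bot.lower() in m.group("agent").lower():
            seen[m.group("path")] = int(m.group("status"))
    return seen

def never_crawled(pages, lines, bot="Googlebot"):
    """Pages you expect to be indexed that the spider never requested."""
    crawled = crawled_by(lines, bot)
    return [p for p in pages if p not in crawled]

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
LOG = [
    f'192.0.2.10 - - [10/Oct/2008:04:12:09 +0100] "GET /index.html HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'192.0.2.10 - - [10/Oct/2008:04:12:11 +0100] "GET /products.html HTTP/1.1" 200 4096 "-" "{GOOGLEBOT}"',
    '198.51.100.2 - - [10/Oct/2008:13:56:01 +0100] "GET /new-page.html HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]
EXPECTED_PAGES = ["/index.html", "/products.html", "/new-page.html"]
print(never_crawled(EXPECTED_PAGES, LOG))
```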

Rogue Automated Robots:

If your site is being hit by a DoS (denial-of-service) attack, where someone floods your servers with requests until they stop responding, you can find out a lot about the attackers by looking in the log files. This could let you block them from accessing the site by their IP address. It could also help you identify automated traffic that is skewing the stats in your third-party analytics systems, so that you can filter it out.
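
As a first pass, simply counting requests per IP address often makes the culprits obvious. This sketch assumes the client IP is the first field on each line, as in the combined format (behind a proxy or load balancer it may be the balancer’s address instead), and uses an arbitrary threshold; a real check would also look at how many requests arrive per minute. `heavy_hitters` and the sample data are hypothetical.

```python
from collections import Counter

def heavy_hitters(lines, threshold):
    """Return (ip, count) pairs, busiest first, for IPs with >= threshold requests."""
    # Assumption: the client IP is the first space-separated field on each line.
    counts = Counter(line.split(" ", 1)[0] for line in lines if line.strip())
    return [(ip, n) for ip, n in counts.most_common() if n >= threshold]

# Made-up sample: one address hammering the home page, one normal visitor.
LOG = (
    ['203.0.113.9 - - [10/Oct/2008:13:55:36 +0100] "GET / HTTP/1.1" 200 5120 "-" "-"'] * 500
    + ['198.51.100.2 - - [10/Oct/2008:13:56:01 +0100] "GET /about.html HTTP/1.1" 200 2048 "-" "Mozilla/5.0"'] * 3
)

for ip, n in heavy_hitters(LOG, threshold=100):
    print(ip, n)
```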

Through log file analysis we have often found errors on the site that users weren’t running into but search engine spiders were. We can then change the site in a way that users won’t notice, but that gives the search engines a cleaner site that won’t confuse them. We have also used it to pick up broken links that users never clicked on but search engine spiders fetched, and that were losing us link juice.
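
One way to find those spider-only errors is to split the 404s by whether the user agent looks like a bot. This sketch assumes combined-format lines and uses a deliberately crude keyword test (`BOT_WORDS` is an assumption, not a complete list of spiders); the helper names and sample lines are hypothetical.

```python
import re

# Assumption: combined log format; capture path, status and user agent.
LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
# Assumption: a crude keyword test for spiders; real bot lists are much longer.
BOT_WORDS = ("bot", "spider", "crawl", "slurp")

def is_bot(agent):
    agent = agent.lower()
    return any(word in agent for word in BOT_WORDS)

def spider_only_404s(lines):
    """404 paths that spiders requested but no (apparently) human visitor did."""
    bot_paths, human_paths = set(), set()
    for line in lines:
        m = LINE.match(line)
        if not m or m.group("status") != "404":
            continue
        target = bot_paths if is_bot(m.group("agent")) else human_paths
        target.add(m.group("path"))
    return sorted(bot_paths - human_paths)

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
LOG = [
    f'192.0.2.10 - - [10/Oct/2008:04:12:09 +0100] "GET /old-promo.html HTTP/1.1" 404 209 "-" "{GOOGLEBOT}"',
    f'192.0.2.10 - - [10/Oct/2008:04:12:10 +0100] "GET /typo.html HTTP/1.1" 404 209 "-" "{GOOGLEBOT}"',
    '198.51.100.2 - - [10/Oct/2008:13:56:01 +0100] "GET /typo.html HTTP/1.1" 404 209 "http://www.example.com/" "Mozilla/5.0 (Windows; U; Windows NT 5.1)"',
]
print(spider_only_404s(LOG))
```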

There are many ways of analysing your log files. One popular way is to download a free tool (such as AWStats) and run your log files through it; it processes them and presents the results in a dashboard. Note that this can take serious time. Your log files are BIG: they contain a line for every file that was downloaded from your server, which will probably be 10 or more for each page view. If your servers are load balanced, you may also have a separate log file for each server. Sometimes it is easier to open them in TextPad and do it yourself, by searching for the 404 errors (or writing a little macro that splits them out).
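
If you do go the do-it-yourself route, the main thing is not to load a whole log file into memory. This sketch streams through several files line by line (say, one per load-balanced server, some of them gzip-compressed after log rotation) and counts lines containing `" 404 `, which is the same crude text search you would do in an editor and assumes the combined format, where the status follows the quoted request. The file names are made up.

```python
import gzip
import os
import tempfile

def open_log(path):
    """Open a plain or gzip-compressed log file as text, one line at a time."""
    if path.endswith(".gz"):
        return gzip.open(path, "rt", encoding="utf-8", errors="replace")
    return open(path, "r", encoding="utf-8", errors="replace")

def count_404s(paths):
    """Stream through every file without loading any of them into memory."""
    total = 0
    for path in paths:
        with open_log(path) as f:
            for line in f:
                if '" 404 ' in line:  # status code right after the quoted request
                    total += 1
    return total

# Demo: two made-up log files, as if from two load-balanced servers.
with tempfile.TemporaryDirectory() as d:
    web1 = os.path.join(d, "web1-access.log")
    web2 = os.path.join(d, "web2-access.log.gz")
    with open(web1, "w", encoding="utf-8") as f:
        f.write('203.0.113.7 - - [10/Oct/2008:13:55:36 +0100] "GET /a.html HTTP/1.1" 404 209 "-" "Mozilla/5.0"\n')
    with gzip.open(web2, "wt", encoding="utf-8") as f:
        f.write('198.51.100.2 - - [10/Oct/2008:13:56:01 +0100] "GET /b.html HTTP/1.1" 404 209 "-" "Mozilla/5.0"\n')
        f.write('198.51.100.2 - - [10/Oct/2008:13:56:02 +0100] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0"\n')
    total = count_404s([web1, web2])

print(total)
```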
